This research addresses the “Paradox of Disclosure”: both too little and too much transparency reduce user trust.

BACKGROUND AND PROBLEM

Generative AI can improve newsroom efficiency but often hides how it shapes stories.

Generic disclosure labels do not help users, detailed traces overwhelm them, and editors need practical tools to disclose AI use without breaking their workflow.

THE PROCESS

The search for answers began

I ran this project in three phases, from research to evaluation.

Research Phase

Expert interviews and thematic analysis to identify challenges in AI transparency and trust.

Design Phase

Speculative design and feature prioritisation to create three adaptive UX prototypes.

Evaluation Phase

Heuristic review, think-aloud testing, and a value alignment checklist.

MY RESEARCH LED ME TO A RABBIT HOLE!

Introducing the paradox of disclosure!

If we under-disclose, users feel manipulated or misled. If we over-disclose, they get overwhelmed, an effect we call transparency fatigue.

We’ve seen both in the industry already, so the solution can’t simply be ‘more transparency.’

HOW DID I DEAL WITH IT?

Converted the paradox into five workable pillars

From nine expert interviews (129 years of combined experience), five pillars emerged, capturing the tug-of-war between usability, trust, and editorial control.

Cognitive Fatigue

Too much AI detail creates cognitive fatigue, not confidence.

Editorial Control

A newsroom's ability to manage and oversee AI involvement in content creation.

AI Literacy

Many readers don’t understand how AI works.

Human Expectations

Users generally expect journalism to be human, and AI disclosure can inadvertently erode this illusion of authorship.

Verifiability

Even with human oversight, users crave auditability.

Design explorations

Each of the three prototypes targeted a different transparency challenge.

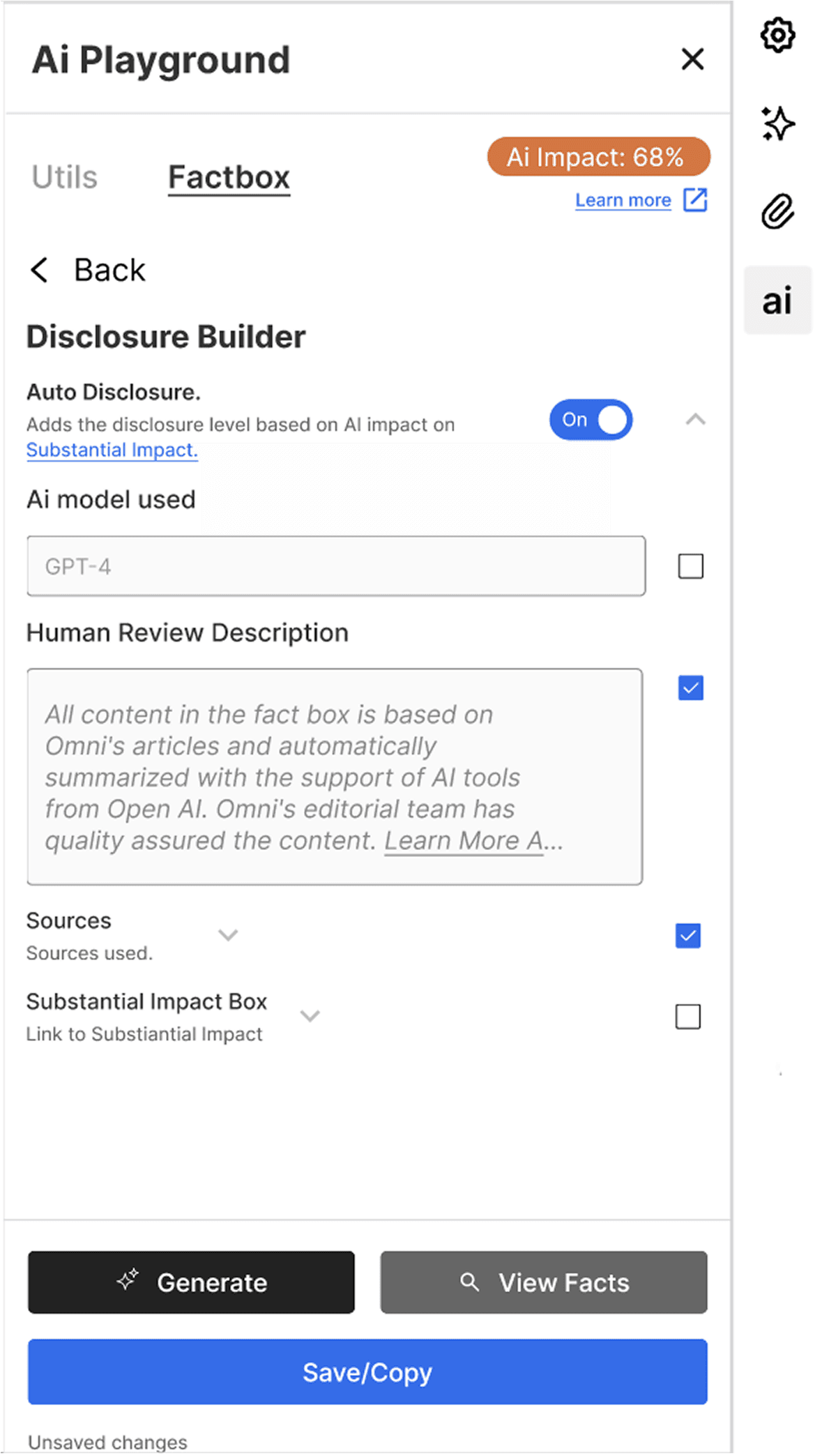

CMS Disclosure Builder

Human Expectations

Editorial Control

Verifiability

Chatbot “Hej Afton”

Cognitive Fatigue

Verifiability

AI Literacy

Newsrooms use AI to create fact boxes alongside news stories—transparent, traceable, and verified before reaching readers.

Due to regulations and editorial policies, AI use in newsrooms is always mediated by human oversight.

It works well, but something felt incomplete

Transparency didn’t fully extend to how AI reasons or adapts to nuance.

Same disclosure used for all articles

No specifics on how AI was used in each story

AI-generated factboxes can subtly shift meaning

No flexibility to add or adjust context per article

Suggested Features in the CMS

These features had their own pros and cons, which surfaced during evaluation.

AI Impact: A figure like “68%” felt too technical; future versions should use clearer labels such as “Mostly AI-written.”

Discoverability: Key settings were hard to find and need to be made more visible.

Source Clarity: Source links need clearer explanations to improve trust.
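
The AI Impact finding above, replacing a raw percentage with a plain-language label, can be sketched roughly as follows. This is only an illustration, not part of the tested prototype: the function name, the thresholds, and every label except “Mostly AI-written” are assumptions chosen for the example.

```python
def ai_involvement_label(ai_share: float) -> str:
    """Map an AI-contribution share (0.0 to 1.0) to a reader-facing label.

    Thresholds and label wording are hypothetical; only
    "Mostly AI-written" comes from the evaluation feedback.
    """
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0.0 and 1.0")
    if ai_share == 0.0:
        return "Written by journalists"
    if ai_share < 0.35:
        return "Lightly AI-assisted"
    if ai_share < 0.65:
        return "Partly AI-written"
    if ai_share < 1.0:
        return "Mostly AI-written"
    return "Fully AI-written"


# The "68%" shown in the prototype would read as a plain label instead.
print(ai_involvement_label(0.68))
```

In practice, the cut-off points would need to be set by editors and checked with readers rather than fixed in code.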

Current Chatbot

Hej Afton is Aftonbladet’s AI chatbot, powered by GPT-4o and verified archives. It handles news, sports, and entertainment in 50 languages.

It’s good for objective news like sports, but it lacks a few things:

Alongside simple queries, users sometimes asked complex questions.

Users cannot see how the AI arrives at its answers, what information it considers, or what it prioritizes.

The chatbot offers a single response style, without options to switch between tones such as Neutral, Contextualized, or Skeptical.

Users cannot choose between a brief summary and a detailed “Full Trace” that shows the reasoning steps and supporting sources.

The system doesn’t allow users to refine responses based on different domains, which limits contextual relevance.

Instead of teaching AI mechanics, chain-of-thought (CoT) gives users a peek behind the curtain

Clarity without cognitive load. I took inspiration from some examples in the industry.

FINAL DESIGN

The final feature is a fairly simple “thinking” button for complex questions.

Let users see how answers are formed and which sources were used.

Offer options like Neutral, Contextualized, or Skeptical.

Allow users to refine responses by domain, e.g., Politics or Science.

Accessibility: The logic felt too complex; simpler language and visuals are needed to make it easier for everyone to understand.

FINAL DESIGN

Integrated within the current chatbot, it opens from the settings icon to filter trusted sources and preferred formats.

Users may not see how filters affect results, and stronger safeguards are needed to prevent unclear or excessive content control.

FINAL DESIGN

A control above each article, letting readers switch between text, audio, or video while keeping content consistent and editor-approved.

It gives users freedom to adjust tone, sources, and focus—personalized without breaking Aftonbladet’s editorial integrity.

FINAL VERDICT!

Three design prototypes were validated through heuristic review and think-aloud testing. The findings were translated into five actionable transparency pillars, presented as guidelines for editorial AI deployment.

The following are the key takeaways!

Quantify Substantial Impact

Make AI involvement measurable and explainable instead of guesswork.

Explain, but only on demand

Give more detail only when users want it and when the story requires it (contextual disclosures).

Editor control

CMS tools should let editors quantify and humanize AI contributions.

Measure impact

Track reading-time, correction rates, and trust metrics after deployment.